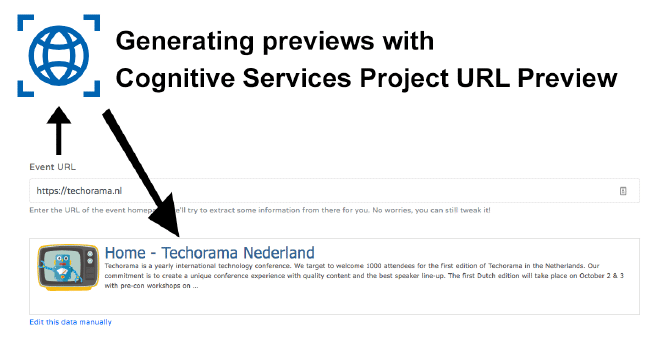

URL Preview with Cognitive Services Project URL Preview

For my project cfp.exchange, I was struggling a bit with how to generate a good preview for the URL that was entered. Then I learned about the Cognitive Services Labs and, more specifically, Project URL Preview, which seems to do exactly what I wanted. In this post, I will share my findings on this new and still experimental service.

Cognitive Services… Labs?

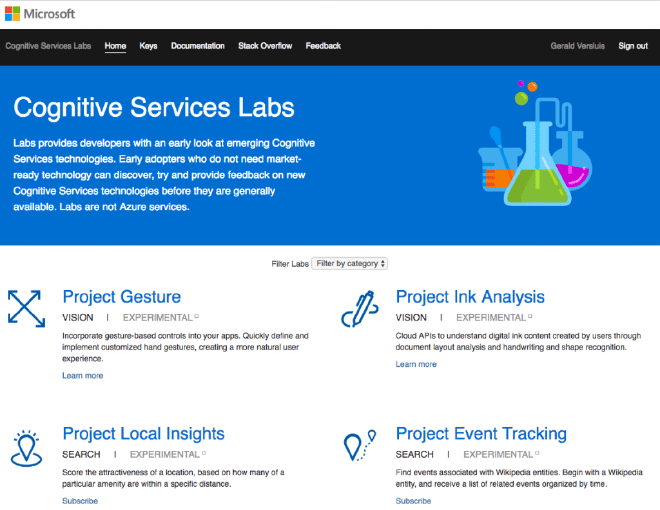

Yes! The Azure Cognitive Services have been out there for a while now. I tend to describe them as poor man’s AI. You can leverage the power of awesome AI functionality, made available to you through a simple REST API that anyone can understand. There are a lot of useful services like computer vision, text analysis, emotion and face analysis and much, much more. If you haven’t seen them yet, go check them out.

But then there is more! The team(s) behind these services aren’t sitting still. They are working on more awesomeness. Some might come out, some might disappear or change, but they are available in an early stage at the Cognitive Services Labs. At the time of writing, you can see things like Project Gesture which lets you handle hand gestures in an easy way. Or the Anomaly Finder, which allows you to easily find anomalies in a supplied dataset. Pretty awesome stuff which you can find here: https://labs.cognitive.microsoft.com/.

Note that projects might change over time and are experimental. Also, some projects can easily be joined by just requesting a key, others might involve some kind of request form first. And lastly, the response times and overall performance might be a bit different than what you are used to from the other Azure services. Nevertheless, don’t let this stop you from playing with this goodness!

Generating a URL Preview

Now for a bit of background. In an earlier post about generating slugs, I already talked about a side-project: CFP Exchange. The way I envisioned it, submitting a CFP should be quick and easy. That is why I wanted functionality like Facebook or Twitter have, which fetches some data from the entered URL, like a relevant image, the page title, and a description. I made an attempt at writing my own parser/scraper. It gets results most of the time, although they are not always great, and quite often it doesn’t get any results at all. Therefore, I was looking for an alternative or better way, but I couldn’t think of anything other than extending my scraper by searching for more ways to extract the right image and description from different tags.

Then, I learned about the URL Preview project. This basically does what I tried to do myself, but of course, better! This particular project is very accessible: you can just request a key and start making requests.

The URL Preview API

The API is pretty straightforward: you call the one endpoint that is currently available, pass it the website you want to preview, and get back a JSON response.
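To make that a bit more concrete, here is a minimal sketch of how such a request could be put together in C#. The endpoint is the one used in this post; the `UrlPreviewRequest` class is a hypothetical name, and the `Ocp-Apim-Subscription-Key` header is an assumption based on how the other Cognitive Services APIs accept their key, so check the Labs documentation before relying on it.

```csharp
using System;
using System.Net.Http;

// Hypothetical helper, not part of any official SDK.
public static class UrlPreviewRequest
{
    // Endpoint as mentioned in the post.
    private const string Endpoint =
        "https://api.labs.cognitive.microsoft.com/urlpreview/v7.0/search";

    public static HttpRequestMessage Create(string pageUrl, string subscriptionKey)
    {
        // The page to preview goes into the q parameter. It has to be escaped,
        // otherwise its own query string would be mixed up with ours.
        var requestUri = $"{Endpoint}?q={Uri.EscapeDataString(pageUrl)}";

        var request = new HttpRequestMessage(HttpMethod.Get, requestUri);

        // Assumption: the key is passed in the same header as for the
        // other Cognitive Services APIs.
        request.Headers.Add("Ocp-Apim-Subscription-Key", subscriptionKey);

        return request;
    }
}
```

A call like `UrlPreviewRequest.Create("https://example.com/some-page", "<your key>")` would then give you a request that you can send with a regular `HttpClient`.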

A call to https://api.labs.cognitive.microsoft.com/urlpreview/v7.0/search?q=, for instance, will result in this:

Yay! My blog is family friendly! So, you can also use this to get some indication if the URL might contain sensitive material. But besides that, all the information is in here to build a meaningful URL preview. Let’s see how I can incorporate this into my own project.

Showing the URL Preview on CFP Exchange

The functionality I had in place was doing this:

- A user enters a URL

- When the URL is valid, retrieve metadata and give it back in a model

- Model is dissected in JavaScript and results are placed in the right controls

Really the only thing I have to do is swap out the implementation of my parsing engine and return the cognitive services results in my own model. Then it should already work!
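The flow above, with the retrieval step kept swappable, could be sketched roughly like this. It is an illustration and not the code from the repository: `PreviewProvider` is a hypothetical class, and the properties on `MetaInformation` are assumed stand-ins for the real model.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical stand-in for the MetaInformation model; property names are assumed.
public class MetaInformation
{
    public string Title { get; set; }
    public string Description { get; set; }
    public string ImageUrl { get; set; }
}

// Hypothetical wrapper that validates the input and delegates the actual
// retrieval to whichever implementation is passed in.
public class PreviewProvider
{
    private readonly Func<string, Task<MetaInformation>> _fetch;

    public PreviewProvider(Func<string, Task<MetaInformation>> fetch)
    {
        _fetch = fetch;
    }

    public async Task<MetaInformation> GetAsync(string input)
    {
        // Only absolute http(s) URLs are considered valid here.
        var isValid = Uri.TryCreate(input, UriKind.Absolute, out var uri)
            && (uri.Scheme == Uri.UriSchemeHttp || uri.Scheme == Uri.UriSchemeHttps);

        if (!isValid)
            return null;

        // The caller decides which implementation does the work:
        // the homemade scraper or the URL Preview service.
        return await _fetch(uri.AbsoluteUri);
    }
}
```

Because the retrieval is just a delegate, switching between the two implementations comes down to passing a different method into the constructor, while the JavaScript side keeps receiving the same model.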

There is no NuGet package or library available yet for this service, and there is really no need for one right now. It is just a call to a single URL with a rather simple return object. For now, the HttpClient will suffice.

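The code I implemented to retrieve the preview lives in the MetaScraper helper class: https://github.com/jfversluis/CfpExchange/blob/master/CfpExchange/Helpers/MetaScraper.cs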

Remember, the MetaInformation model was already there and holds all the information I need. So, in the existing static helper class I had for this, I just added the new method alongside the old one. I can now easily swap between the two without having to touch any other code. The way I extract the JSON might look a bit funny. What you see happening a lot is that people, including myself, first get the JSON as a string and then deserialize it. This can potentially put a lot of pressure on the memory of your machine. Looking into this after reading a discussion on Twitter, I found this page on performance: https://www.newtonsoft.com/json/help/html/Performance.htm, and I thought I would give it a try.
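For reference, deserializing straight from the response stream with Json.NET looks roughly like this. This is a minimal sketch along the lines of the performance page linked above, not a copy of the code in the repository; `JsonStreamHelper` and `ReadJsonAsync` are hypothetical names, and it needs the Newtonsoft.Json NuGet package.

```csharp
using System.IO;
using System.Net.Http;
using System.Threading.Tasks;
using Newtonsoft.Json;

// Hypothetical helper that reads a JSON response without first
// loading the whole body into a string.
public static class JsonStreamHelper
{
    public static async Task<T> ReadJsonAsync<T>(HttpClient client, HttpRequestMessage request)
    {
        // ResponseHeadersRead returns as soon as the headers are in,
        // so the body is not buffered up front.
        using (var response = await client.SendAsync(
            request, HttpCompletionOption.ResponseHeadersRead))
        {
            response.EnsureSuccessStatusCode();

            using (var stream = await response.Content.ReadAsStreamAsync())
            using (var streamReader = new StreamReader(stream))
            using (var jsonReader = new JsonTextReader(streamReader))
            {
                // The serializer pulls tokens from the stream as it goes,
                // instead of parsing one big string.
                var serializer = new JsonSerializer();
                return serializer.Deserialize<T>(jsonReader);
            }
        }
    }
}
```

Here `T` would be a small class shaped after the JSON that the service returns. For a response as small as this one the gain is probably modest, but the pattern costs next to nothing and pays off with bigger payloads.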

Calling this method isn’t that interesting, so I won’t cover it in much detail here. If you are interested in the whole solution, check this out as a starting point: https://github.com/jfversluis/CfpExchange/blob/master/CfpExchange/Controllers/CfpController.cs#L52

The results

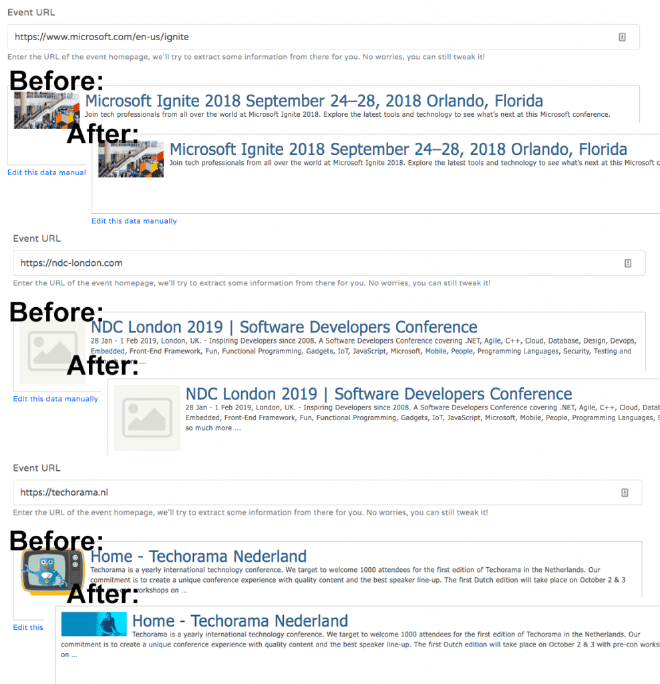

After implementing this code, I had to see if it made any real difference compared to my own code. It was a bit unexpected to see that it didn’t make as much impact as I had hoped. Below you can see a couple of before/after scenarios. Before is with my own “algorithm”, after is with the URL Preview service.

The only difference it made was with the bottom one, Techorama, where you see it picked up a different image, which isn’t even for the better in my opinion.

I have tried some other entries that are in my database right now, specifically the ones where no images were picked up. Unfortunately, it didn’t do much better there either. In some cases, it did return an image, but only as a relative URL, so the image is broken.
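One possible workaround for those relative image URLs is to resolve them against the address of the page they came from. The sketch below is an idea and not something that is in the project; `ImageUrlFixer` is a hypothetical helper, and it assumes you still have the original page URL at hand.

```csharp
using System;

// Hypothetical helper that turns a relative image URL into an absolute one.
public static class ImageUrlFixer
{
    public static string ToAbsolute(string pageUrl, string imageUrl)
    {
        if (string.IsNullOrWhiteSpace(imageUrl))
            return null;

        // Resolving against the page URL leaves an already absolute image URL
        // as it is and completes a relative one with the scheme and host
        // (and, where needed, the path) of the page.
        if (Uri.TryCreate(pageUrl, UriKind.Absolute, out var baseUri) &&
            Uri.TryCreate(baseUri, imageUrl, out var resolved))
        {
            return resolved.AbsoluteUri;
        }

        // Could not make sense of it, so hand back what we got.
        return imageUrl;
    }
}
```

Calling `ImageUrlFixer.ToAbsolute("https://example.com/events/2018/", "/images/logo.png")` should then yield a URL on example.com that the browser can actually load.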

In Closing

Overall, I didn’t win much, it seems. Of course, I could have saved myself a lot of trouble if I had known about this service beforehand. So, if you need this and don’t have any code of your own yet, check it out, it is pretty sweet!

The fact that my own code came up with mostly the same results surprised me a little. The service is still experimental, so I have no doubt that it will get better if they continue working on it. For now, I have not switched to it as a replacement for my own code. I did upload the code, it just isn’t called. You can see the URL Preview and my own implementation side by side here: https://github.com/jfversluis/CfpExchange/blob/master/CfpExchange/Helpers/MetaScraper.cs

I will, in time, check out this service again and hope it will surprise me again, for the better this time. To help make it better I will start providing some feedback to the team on my findings.

Next on my wishlist is checking out Project Gesture, but I will need a special camera for that… Stay tuned!